Here are some key terms for this topic: Key Terms C7

GCSE Bitesize has revision pages (that take you through the topic), a test and an activity – click on Rates of Reaction. GCSE s-cool – Rates of Reaction – revision notes & animations, practice exam questions and a revision summary. Skoool Chemistry – click on ‘6. Rates of Reaction’ for excellent lessons that take you through the topic; also a glossary of key terms.

Chemactive worksheets and answers: just click on ‘Rates questions’.

For Doc Brown’s Chemistry quizzes, word-fills, crosswords and study notes, go here and just scroll down.

This is a great PowerPoint that also revises C6 Energy changes in chemical reactions:

Chemical reactions from Carole Paquette

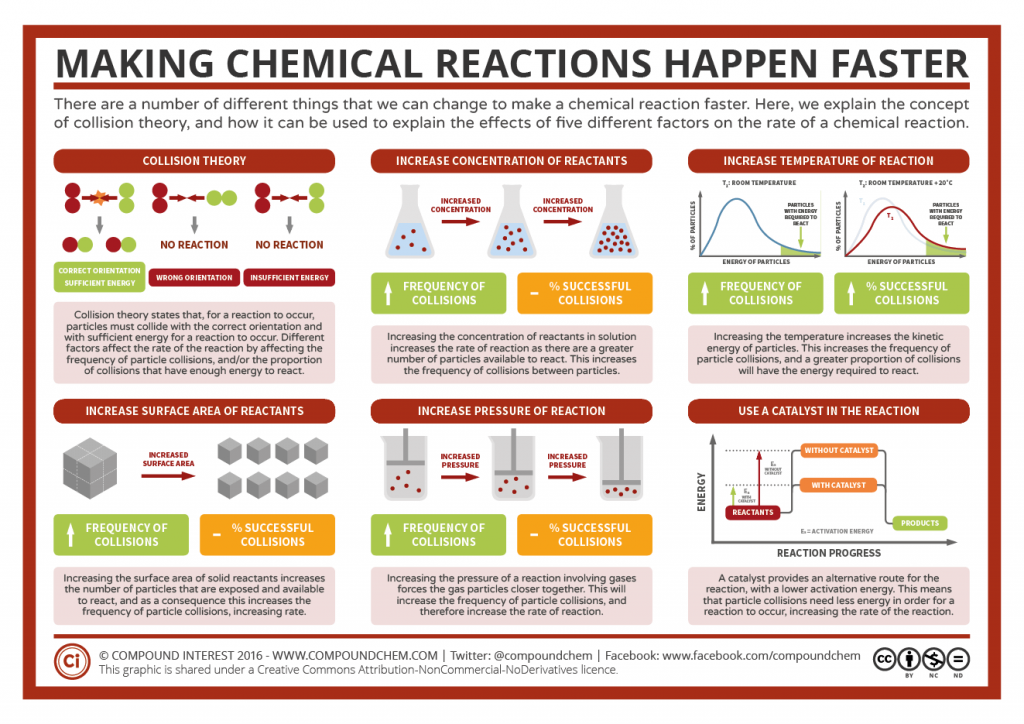

C7.1 RATE OF REACTION

Rate of reaction chemical kinetics (condensed) from jslayer

Science Bank (Reactions & Energy Changes): part 2 Reaction Rates (from 4m 40s) & part 3 Catalysts (from 9m 20s):

Five minutes on how to speed up chemical reactions (and get a date) from TED-Ed.

GCSE Bitesize – two videos – Rates of Reaction (3m 18s) or Rates of Reactions (4m 40s) & Bitesize Top 20 Science Demos – Rates of Reaction (5m 51s).

C7.2 REDOX

IGCSE Chemistry: a 25m lesson from ChemistryKlipz that covers redox reactions (to 6m 20s):

BBC Short Circuit Redox Changes – a 19m video.